Continuing from ‘Procedural generated mesh in Unity’, I’ll show here how to enhance procedurally generated meshes with UV mapping.

UV Coordinates explained

UV mapping refers to the way each 3D surface is mapped to a 2D texture. Each vertex contains a set of UV coordinates, where (0.0, 0.0) refers to the lower left corner of the texture and (1.0, 1.0) refers to the upper right corner. If the UV coordinates are outside the 0.0 to 1.0 range, they are either clamped or the texture is repeated, depending on the texture’s Wrap Mode import setting in Unity.
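As a rough illustration of the two behaviours, the sketch below applies clamping and repeating to a single UV component. It is a simplification in plain C# (no UnityEngine dependency), not Unity’s actual sampler, which also filters between texels; the class name `UvWrap` is made up for this example.

```csharp
using System;

// Hypothetical helper: how an out-of-range UV component is resolved
// by the Repeat and Clamp wrap modes. Only coordinate wrapping is shown;
// a real GPU sampler also filters between texels.
public static class UvWrap
{
    // Repeat: keep only the fractional part, so 1.25 -> 0.25 and -0.25 -> 0.75.
    public static float Repeat(float t)
    {
        return t - (float)Math.Floor(t);
    }

    // Clamp: pin the coordinate to the [0, 1] range.
    public static float Clamp(float t)
    {
        return Math.Min(Math.Max(t, 0f), 1f);
    }
}
```

Inside Unity, `Mathf.Repeat(t, 1f)` and `Mathf.Clamp01(t)` compute the same values for a single coordinate.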

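Just as each vertex is associated with a normal, each vertex is also associated with UV coordinates. This means that you sometimes need to duplicate a vertex: if two triangles share a corner position but need different UV coordinates at that corner, each triangle needs its own copy of the vertex.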
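To see why UVs force duplicated vertices, the sketch below merges mesh corners only when both the position and the UV are identical. It uses `System.Numerics.Vector3`/`Vector2` in place of Unity’s vector types so it runs outside Unity; `VertexWelder` and `Weld` are hypothetical names, and the comparison is exact (no tolerance).

```csharp
using System.Collections.Generic;
using System.Numerics; // stand-in for UnityEngine.Vector2/Vector3 (assumption)

// Hypothetical helper: merges mesh corners that share BOTH position and UV.
public static class VertexWelder
{
    // Returns the number of distinct vertices; remap[i] is the new index of corner i.
    public static int Weld(Vector3[] positions, Vector2[] uvs, out int[] remap)
    {
        var lookup = new Dictionary<(Vector3, Vector2), int>();
        remap = new int[positions.Length];
        for (int i = 0; i < positions.Length; i++)
        {
            var key = (positions[i], uvs[i]);
            if (!lookup.TryGetValue(key, out int index))
            {
                // First time this exact (position, UV) pair is seen: assign a new index.
                index = lookup.Count;
                lookup.Add(key, index);
            }
            remap[i] = index;
        }
        return lookup.Count;
    }
}
```

For two triangles forming a quad (6 corners), the shared corners collapse and 4 vertices remain when the UVs at those corners also match; if the second triangle uses different UVs at the shared corners, all 6 vertices are still needed.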

Example

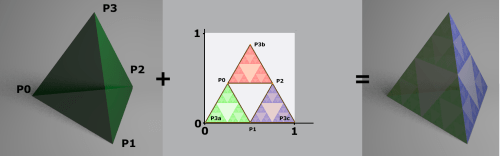

Enough theory; let’s take an example and see how a tetrahedron can be UV mapped. Basically, what we are looking for is a way to go from 3D coordinates (the local mesh coordinates x, y, z) to 2D coordinates (u, v).

The simplest approach would be to drop one of the 3D coordinates; for example, you could set the UV coordinates of each vertex to its x and z coordinates. However, this approach is rarely useful.
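A minimal sketch of this planar projection, again with `System.Numerics` types standing in for Unity’s; `PlanarUv.FromXZ` is a hypothetical helper. It also shows the weakness: any two vertices that differ only in y get the same UV.

```csharp
using System.Numerics; // stand-in for UnityEngine.Vector2/Vector3 (assumption)

// Hypothetical helper: planar UV projection that drops the y coordinate.
public static class PlanarUv
{
    public static Vector2[] FromXZ(Vector3[] vertices)
    {
        var uvs = new Vector2[vertices.Length];
        for (int i = 0; i < vertices.Length; i++)
        {
            // y is discarded, so faces parallel to the y axis collapse
            // into a line in texture space.
            uvs[i] = new Vector2(vertices[i].X, vertices[i].Z);
        }
        return uvs;
    }
}
```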

When creating a procedurally generated mesh in Unity, you will most often want full control over where the UV coordinates are placed.

In the example below, I’ll map a texture of a Sierpinski Triangle to the tetrahedron. The texture is chosen because it wraps perfectly around the tetrahedron.

Note that the vertex P3 is mapped to three different UV coordinates: P3a, P3b and P3c. Also notice that a lot of space in the texture is wasted.
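The UV coordinates in the code below come from an equilateral triangle with side length 1 placed in the lower part of the texture and split at its edge midpoints into four smaller triangles. The same arithmetic quantifies the wasted space:

$$
\begin{aligned}
h &= \sqrt{1^2 - 0.5^2} = \sqrt{0.75} \approx 0.866 \\
\text{corners: } uv_{3a} &= (0,\ 0),\quad uv_{3c} = (1,\ 0),\quad uv_{3b} = (0.5,\ h) \\
\text{edge midpoints: } uv_1 &= (0.5,\ 0),\quad uv_0 = (0.25,\ h/2),\quad uv_2 = (0.75,\ h/2) \\
\text{used area} &= \tfrac{1}{2} \cdot 1 \cdot h \approx 0.433
\end{aligned}
$$

So only about 43% of the unit texture square is covered, and roughly 57% is unused.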

In Unity you can create this UV mapping the following way:

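MeshFilter meshFilter = GetComponent<MeshFilter>();
if (meshFilter == null) {
    Debug.LogError("MeshFilter not found!");
    return;
}

// Base triangle in the y = 0 plane (edge length 1)
Vector3 p0 = new Vector3(0, 0, 0);
Vector3 p1 = new Vector3(1, 0, 0);
Vector3 p2 = new Vector3(0.5f, 0, Mathf.Sqrt(0.75f));
// Apex above the base centroid; a height of sqrt(2/3) makes every edge
// length 1, so all faces are equilateral like the triangles in the texture
Vector3 p3 = new Vector3(0.5f, Mathf.Sqrt(2f / 3f), Mathf.Sqrt(0.75f) / 3);

Mesh mesh = meshFilter.sharedMesh;
if (mesh == null) {
    // The MeshFilter has no mesh yet, so create one
    mesh = new Mesh();
    meshFilter.sharedMesh = mesh;
}
mesh.Clear();

// Each face gets its own three vertices so it can have its own UVs
mesh.vertices = new Vector3[] {
    p0, p1, p2,
    p0, p2, p3,
    p2, p1, p3,
    p0, p3, p1
};
mesh.triangles = new int[] {
    0, 1, 2,
    3, 4, 5,
    6, 7, 8,
    9, 10, 11
};

// Equilateral UV triangle split at its edge midpoints (uv0, uv1, uv2);
// P3 ends up at all three outer corners (uv3a, uv3b, uv3c)
Vector2 uv3a = new Vector2(0, 0);
Vector2 uv1 = new Vector2(0.5f, 0);
Vector2 uv0 = new Vector2(0.25f, Mathf.Sqrt(0.75f) / 2);
Vector2 uv2 = new Vector2(0.75f, Mathf.Sqrt(0.75f) / 2);
Vector2 uv3b = new Vector2(0.5f, Mathf.Sqrt(0.75f));
Vector2 uv3c = new Vector2(1, 0);

// One UV per vertex, in the same order as mesh.vertices
mesh.uv = new Vector2[] {
    uv0, uv1, uv2,    // p0, p1, p2: center triangle
    uv0, uv2, uv3b,   // p0, p2, p3: top corner
    uv2, uv1, uv3c,   // p2, p1, p3: right corner
    uv0, uv3a, uv1    // p0, p3, p1: left corner
};

mesh.RecalculateNormals();
mesh.RecalculateBounds();
mesh.Optimize();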

Other usages

A more advanced use of this technique can be found in ‘Escape from planet Zombie’. Here the town was created procedurally on a sphere and UV mapped to a single texture.

The texture had 3 building facades and 4 rooftops. The UV mapping depended on the height of each building, so that a building could have from 1 to 4 floors.
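The article does not show how the building UVs were computed. Purely as a hypothetical illustration of height-dependent mapping, assume each facade region in the atlas spans `vMin` to `vMax` and depicts four floors stacked bottom to top; a building with fewer floors then uses only the lower part of that region. `FacadeUv` and this layout are assumptions, not the game’s code.

```csharp
using System;

// Hypothetical layout (assumption): a facade region in the atlas spans
// v = vMin .. vMax and shows MaxFloors floors stacked bottom to top.
public static class FacadeUv
{
    public const int MaxFloors = 4;

    // Top v coordinate of a wall for a building with `floors` floors,
    // so the wall shows only the lowest `floors` floors of the facade.
    public static float WallTopV(float vMin, float vMax, int floors)
    {
        if (floors < 1 || floors > MaxFloors)
            throw new ArgumentOutOfRangeException(nameof(floors));
        return vMin + (vMax - vMin) * floors / MaxFloors;
    }
}
```

The wall’s bottom vertices would use `vMin` and its top vertices `WallTopV(...)`, so a two-floor building shows the lower half of the facade without stretching it.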

The result of the UV mapping in ‘Escape from planet Zombie’:

Links

The full source code of the tetrahedron example can be found here:

https://github.com/mortennobel/ProceduralMesh/blob/master/TetrahedronUV.cs

Also feel free to check out the rest of the ProceduralMesh project:

https://github.com/mortennobel/ProceduralMesh

A playable demo of ‘Escape from planet Zombie’ can be found here:

http://www.wooglie.com/playgame.php?gameID=151

Hello! Thanks for this very useful tutorial. I’m trying to wrap my head around UVs and make a texture atlas from multiple textures with Texture2D.PackTextures, which returns a Rect[]. It’s starting to make sense now. Thanks!

By: Ippokratis on April 20, 2011

at 10:08
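The remapping mentioned in the comment above can be sketched like this. According to Unity’s documentation, `Texture2D.PackTextures` returns one `Rect` per input texture, expressed in the atlas’s 0..1 UV space; to keep the example runnable without UnityEngine, the rectangle is passed as four floats, and `AtlasUv.Remap` is a hypothetical helper.

```csharp
using System.Numerics; // stand-in for UnityEngine.Vector2 (assumption)

// Hypothetical helper: moves UVs authored for a single texture into the
// sub-rectangle (x, y, width, height) that texture occupies in an atlas.
public static class AtlasUv
{
    public static Vector2[] Remap(Vector2[] uvs, float x, float y, float width, float height)
    {
        var result = new Vector2[uvs.Length];
        for (int i = 0; i < uvs.Length; i++)
        {
            // Scale into the rectangle's size, then offset by its lower-left corner.
            result[i] = new Vector2(x + uvs[i].X * width, y + uvs[i].Y * height);
        }
        return result;
    }
}
```

This only works for UVs inside 0..1: tiling UVs cannot be confined to a sub-rectangle of an atlas, because the wrap mode applies to the whole atlas texture.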

Something that bothers me about the example is that there are redundant vertices (each vertex is repeated 3 times in the vertices array). If the object didn’t have any texture, you would need only 1/3 of the vertices.

I ran into the same problem when trying to map a texture onto a terrain (this blog helped me out, thank you).

Is this the only way to do UV mapping, or is there a way to have 1/3 of the vertices and still have a complex UV map?

By: Ezequiel Pozzo (@pozzoeRand) on December 4, 2011

at 01:46

You should think of it this way: you are serving the vertices (and their attributes, such as UVs and normals) to the GPU in a way that is as easy as possible for the GPU to consume. This means redundant information.

There is no workaround for the redundancy in Unity (unless you are able to compute the UVs on the fly – but that is not a very general approach).

However, for terrain generation you often want the same UV and normal for a vertex in every triangle that uses it, and in that case there is no reason to repeat vertex positions.

By: Morten Nobel-Jørgensen on December 4, 2011

at 12:58

Hi!

The problem I see is that UV information is tied to vertices (you need as many UVs as vertices) instead of to triangle corners. I guess that’s not specific to Unity, right?

What I’m trying to do is texturing a terrain generated using marching cubes (triplanar texturing doesn’t work for me). For horizontal terrains I have one texture that needs to be tiled once per voxel. I have something like:

Vector3 p0 = new Vector3(0,0,0);
Vector3 p1 = new Vector3(1,0,0);
Vector3 p2 = new Vector3(1,0,1);
Vector3 p3 = new Vector3(0,0,1);
Vector3 p4 = new Vector3(0,0,2);
Vector3 p5 = new Vector3(1,0,2);

mesh.vertices = new Vector3[]{
    p0, p1, p2,
    p0, p2, p3,
    p3, p2, p4,
    p3, p5, p4
};

mesh.triangles = new int[]{
    0,1,2,
    3,4,5,
    6,7,8
};

Vector2 uv0 = new Vector2(0,0);
Vector2 uv1 = new Vector2(0.5F,0);
Vector2 uv2 = new Vector2(0.5F,0.5F);
Vector2 uv3 = new Vector2(0,0.5F);

mesh.uv = new Vector2[]{
    uv0, uv1, uv2,
    uv0, uv2, uv3,
    uv0, uv1, uv2,
    uv0, uv2, uv3
};

Note that only a quarter of the texture is meant to be used for horizontal surfaces (the rest is for other parts of the mesh). This works perfectly; I had to read this article to understand that I needed to do it this way.

However, it bothers me that I have to define vertices in a redundant way instead of doing:

mesh.vertices = new Vector3[]{
    p0, p1, p2,
    p3, p4, p5
};

mesh.triangles = new int[]{
    0,1,2,
    0,2,3,
    3,2,4,
    3,4,5
};

This is OK. It works with solid colors, but I can’t define the UVs correctly. This won’t work:

mesh.uv = new Vector2[]{
    uv0, uv1, uv2,
    uv3, uv1, uv2
};

Do you know other way of doing this? Thank you for the information.

By: Ezequiel Pozzo (@pozzoeRand) on December 4, 2011

at 20:53

It looks OK. Maybe you should consider storing only the terrain in your mesh; then set the texture’s wrap mode to Repeat, and you should be able to save a few vertices (so the UVs in one direction would be 0.0, 1.0, 2.0, 3.0, 4.0, etc.).

By: Morten Nobel-Jørgensen on December 4, 2011

at 21:54
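A possible sketch of the suggestion above, assuming a flat grid on the XZ plane where each cell should show the whole texture once: neighbouring cells share vertices, and each vertex’s UV is simply its integer grid coordinate. This relies on the texture’s wrap mode being Repeat and on the texture containing only the terrain tile (not an atlas). `TiledGrid.Build` is a hypothetical helper using `System.Numerics` types in place of Unity’s.

```csharp
using System.Numerics; // stand-in for UnityEngine.Vector2/Vector3 (assumption)

// Hypothetical helper: a cellsX x cellsZ grid of unit quads with shared
// vertices; UVs are integer grid coordinates, so a Repeat-mode texture
// tiles once per cell.
public static class TiledGrid
{
    public static void Build(int cellsX, int cellsZ,
        out Vector3[] vertices, out Vector2[] uvs, out int[] triangles)
    {
        int columns = cellsX + 1;
        vertices = new Vector3[columns * (cellsZ + 1)];
        uvs = new Vector2[vertices.Length];
        for (int z = 0; z <= cellsZ; z++)
        {
            for (int x = 0; x <= cellsX; x++)
            {
                int i = z * columns + x;
                vertices[i] = new Vector3(x, 0, z);
                uvs[i] = new Vector2(x, z); // 0, 1, 2, ... along each axis
            }
        }

        triangles = new int[cellsX * cellsZ * 6];
        int t = 0;
        for (int z = 0; z < cellsZ; z++)
        {
            for (int x = 0; x < cellsX; x++)
            {
                int i = z * columns + x;
                // Two triangles per cell, clockwise when seen from above (+y),
                // which Unity treats as front-facing.
                triangles[t++] = i;
                triangles[t++] = i + columns;
                triangles[t++] = i + columns + 1;
                triangles[t++] = i;
                triangles[t++] = i + columns + 1;
                triangles[t++] = i + 1;
            }
        }
    }
}
```

A 2 x 1 grid needs 6 vertices instead of 12, and the vertex at grid position (2, 1) gets UV (2, 1), so the texture repeats twice along x.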

Thank you for the tutorial…. Extremely useful….

By: Sreenath on December 13, 2011

at 15:37

This is a great tutorial, but how can we create a unique mesh each time?

Currently, if I duplicate the tetrahedron and then regenerate it with different settings, both copies change.

By: Kurt on January 29, 2012

at 13:24

It’s my first time encountering this and I’m new to UV mapping. How do you actually place the texture on the generated mesh? All I see is the UV mapping, but I don’t see how the texture is defined or how you would assign it to the mesh.

By: neoj on April 30, 2012

at 02:55

First take a look at http://en.wikipedia.org/wiki/UV_mapping . The UV coordinates point to a position in a texture. To make this position general and independent of the actual texture resolution, the texture width and height are normalized to 1.0 (this means that a coordinate such as (0.5, 0.5) always points to the middle of the texture). It is up to you to decide how you actually want to map the texture to the mesh using UV coordinates.

By: Morten Nobel-Jørgensen on April 30, 2012

at 08:23
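To make the normalization concrete, here is a small sketch converting between pixel positions and UVs for a given texture size (hypothetical `PixelUv` helper, with `System.Numerics.Vector2` standing in for Unity’s `Vector2`):

```csharp
using System.Numerics; // stand-in for UnityEngine.Vector2 (assumption)

// Hypothetical helper: converts between pixel positions and normalized UVs.
public static class PixelUv
{
    // Divide by the resolution so the result is independent of texture size.
    public static Vector2 ToUv(float px, float py, int width, int height)
    {
        return new Vector2(px / width, py / height);
    }

    // Multiply by the resolution to get back to pixel units.
    public static Vector2 ToPixel(Vector2 uv, int width, int height)
    {
        return new Vector2(uv.X * width, uv.Y * height);
    }
}
```

Pixel (256, 128) in a 512×256 texture and pixel (512, 512) in a 1024×1024 texture both map to UV (0.5, 0.5). If you need the center of a specific texel rather than its corner, add 0.5 to the pixel position before dividing.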

For some “seat of the pants” orientation: this is very similar to drafting (drawing) patterns for creating sheet metal parts. The whole 3D thing is like forming very ductile sheet metal with a grain structure analogous to the polygons, with deformations only occurring at the grain boundaries. The ubiquity of computers allows for more complicated 3D creations, but they can all be “cut apart” and flattened in a known or purposely determined manner, which then allows the pattern (texture) to be returned to the 3D state.

By: lloyd sutfin on September 7, 2012

at 16:36

Hi, I wanted to know if I could have your permission to use the building texture file used in this article for my own 3D UV project? I would give full credit for the texture to you and link back to this article. Please let me know if this is permissible =)

By: MK on September 21, 2012

at 07:15

You are more than welcome 🙂 I’m looking forward to reading your article.

By: Morten Nobel-Jørgensen on September 21, 2012

at 08:16

Pretty little game, but awful gameplay. 😉

By: Caue Rego on December 5, 2012

at 15:05

Hi Morten,

pretty good blog, I’ve been reading your articles for a while (“reading” because I always understand a bit more each time I read).

Could you take a look at this question (http://answers.unity3d.com/questions/362969/texture-atlas-and-planar-uvs.html) I’ve just posted on Unity Answers? I think it is a very easy thing to do, but I can’t figure it out, so I thought you could help me.

By: Marcos Saito de Paula on December 12, 2012

at 18:03

Thank you. This is the first online tutorial I have seen where UV was actually defined as a 2D coordinate system, which made everything I have ever read about them suddenly click into place. Thanks for not assuming someone already knows what all the abbreviations are.

By: Tony on January 6, 2013

at 19:44

Thank you sir, this is the best tutorial I have found on this subject and it was very helpful in understanding this concept. Well done!

By: mothball on February 8, 2013

at 08:21

This stopped me from beating around the bush… great tutorial.

By: sdb on March 26, 2013

at 16:20

Thanks a lot! It’s quite hard to find explanations of UV mapping, as any search leads to Maya/Blender/3DSMax tutorials. Thanks again!

By: sven.taton@gmail.com on March 26, 2014

at 15:12

I’ve been jumping between different tutorials and forums trying to generate a tetrahedron in JavaScript, but I keep messing things up. I was wondering if you could take a look at my code. It’s a script for making primitives in the editor, just a little project. I won’t post the whole code because it’s big; I’ll just post the important parts. I’ll post the errors below this post as a reply.

@MenuItem ("Keenan's Cool Tools / Create Primitive At Origin / Tetrahedron")
static function CreatePyramid ()
{
    //create an empty gameobject with that name
    var Tetrahedron : GameObject = new GameObject ("Tetrahedron");
    //add a meshfilter
    Tetrahedron.AddComponent(MeshFilter);
    Tetrahedron.AddComponent(MeshRenderer);
    //the four points that make a tetrahedron
    var vert0 : Vector3 = Vector3(0,0,0);
    var vert1 : Vector3 = Vector3(1,0,0);
    var vert2 : Vector3 = Vector3(0.5f,0,Mathf.Sqrt(0.75f));
    var vert3 : Vector3 = Vector3(0.5f,Mathf.Sqrt(0.75f),Mathf.Sqrt(0.75f)/3);
    var vertices : Vector3[] =
    [
        vert0,vert1,vert2,vert0,
        vert2,vert3,vert2,vert1,
        vert3,vert0,vert3,vert1
    ];
    var triangles : int[] =
    [
        0,1,2,
        3,4,5,
        6,7,8,
        9,10,11
    ];
    var uv3a : Vector2 = new Vector2(0,0);
    var uv1 : Vector2 = new Vector2(0.5f,0);
    var uv0 : Vector2 = new Vector2(0.25f,Mathf.Sqrt(0.75f)/2);
    var uv2 : Vector2 = new Vector2(0.75f,Mathf.Sqrt(0.75f)/2);
    var uv3b : Vector2 = new Vector2(0.5f,Mathf.Sqrt(0.75f));
    var uv3c : Vector2 = new Vector2(1,0);
    var uvs : Vector2[] =
    [
        uv0,uv1,uv2,
        uv0,uv2,uv3b,
        uv0,uv1,uv3a,
        uv1,uv2,uv3c
    ];
    //create a new mesh, assign the vertices and triangles
    var mesh : Mesh = new Mesh ();
    mesh.uv = uvs;
    mesh.vertices = vertices;
    mesh.triangles = triangles;
    //recalculate normals, bounds and optimize
    mesh.RecalculateNormals();
    mesh.RecalculateBounds();
    mesh.Optimize();
    (Tetrahedron.GetComponent(MeshFilter) as MeshFilter).mesh = mesh;
    var shader : Shader;
    var tetraColor : Color = Color.white;
    Tetrahedron.renderer.material = new Material (Shader.Find("Diffuse"));
    Tetrahedron.renderer.color = tetraColor;
}

By: keenanwoodall on July 15, 2014

at 18:36

Here’s the errors I get:

—————————————————————————————-

Mesh.uv is out of bounds. The supplied array needs to be the same size as the Mesh.vertices array.

UnityEngine.Mesh:set_uv(Vector2[])

KeenansCoolTools:CreatePyramid() (at Assets/Editor/KeenansCoolTools.js:118)

—————————————————————————————-

MissingFieldException: UnityEngine.MeshRenderer.color

Boo.Lang.Runtime.DynamicDispatching.PropertyDispatcherFactory.FindExtension (IEnumerable`1 candidates)

Boo.Lang.Runtime.DynamicDispatching.PropertyDispatcherFactory.Create (SetOrGet gos)

Boo.Lang.Runtime.DynamicDispatching.PropertyDispatcherFactory.CreateSetter ()

Boo.Lang.Runtime.RuntimeServices.DoCreatePropSetDispatcher (System.Object target, System.Type type, System.String name, System.Object value)

Boo.Lang.Runtime.RuntimeServices.CreatePropSetDispatcher (System.Object target, System.String name, System.Object value)

Boo.Lang.Runtime.RuntimeServices+c__AnonStorey19.m__F ()

Boo.Lang.Runtime.DynamicDispatching.DispatcherCache.Get (Boo.Lang.Runtime.DynamicDispatching.DispatcherKey key, Boo.Lang.Runtime.DynamicDispatching.DispatcherFactory factory)

Boo.Lang.Runtime.RuntimeServices.GetDispatcher (System.Object target, System.String cacheKeyName, System.Type[] cacheKeyTypes, Boo.Lang.Runtime.DynamicDispatching.DispatcherFactory factory)

Boo.Lang.Runtime.RuntimeServices.GetDispatcher (System.Object target, System.Object[] args, System.String cacheKeyName, Boo.Lang.Runtime.DynamicDispatching.DispatcherFactory factory)

Boo.Lang.Runtime.RuntimeServices.SetProperty (System.Object target, System.String name, System.Object value)

KeenansCoolTools.CreatePyramid () (at Assets/Editor/KeenansCoolTools.js:130)

—————————————————————————————-

Shader wants texture coordinates, but the mesh doesn’t have them

^^^^Just a notification to the log, not a yellow warning, or red error ^^^^

By: keenanwoodall on July 15, 2014

at 18:41

A few ideas: the order in which you assign vertices, triangles and UVs to the mesh matters. Assign the vertices first and then the rest. The other error is simple to fix: use ‘Tetrahedron.renderer.material.color’ instead of ‘Tetrahedron.renderer.color’.

By: Morten Nobel-Jørgensen on July 15, 2014

at 18:57
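The rules behind the first error can be sketched as a simple check: per-vertex arrays such as the UVs must have exactly as many entries as there are vertices, and triangle indices must refer to existing vertices. Because Unity performs the UV length check when `mesh.uv` is assigned (as the error message in the thread shows), assigning it while a new mesh still has zero vertices fails, which is why the vertices go first. `MeshArrayCheck.Validate` is a hypothetical helper, not a Unity API.

```csharp
using System.Collections.Generic;

// Hypothetical helper mirroring the consistency rules between mesh arrays.
public static class MeshArrayCheck
{
    public static List<string> Validate(int vertexCount, int uvCount, int[] triangles)
    {
        var problems = new List<string>();
        // Per-vertex data must match the current vertex count.
        if (uvCount != vertexCount)
            problems.Add($"uv has {uvCount} entries but the mesh has {vertexCount} vertices");
        // Triangles are stored as index triples.
        if (triangles.Length % 3 != 0)
            problems.Add("triangle index count is not a multiple of 3");
        // Every index must refer to an existing vertex (report the first bad one).
        foreach (int index in triangles)
        {
            if (index < 0 || index >= vertexCount)
            {
                problems.Add($"triangle index {index} is out of range");
                break;
            }
        }
        return problems;
    }
}
```

Assigning 12 UVs to a fresh mesh corresponds to `Validate(0, 12, ...)`, which reports a mismatch; after the 12 vertices are assigned, the same UVs pass.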

[…] Nobel-Jørgensen, M. (2011, April 05). Procedural generated mesh in Unity part 2 with UV mapping. Retrieved January 25, 2016, from https://blog.nobel-joergensen.com/2011/04/05/procedural-generated-mesh-in-unity-part-2-with-uv-mappin… […]

By: R & D Blog – Week 2 – Part 1 | animationblog on January 30, 2016

at 18:21